Building Trust in AI

The Foundation for Responsible and Scalable Innovation

Organizations today face a critical challenge: scaling AI without losing trust.

AI is no longer limited to experimentation—it is actively shaping decisions across industries, from loan approvals in banking to diagnostics in healthcare and supply chain optimization in manufacturing. But as AI becomes deeply embedded in business operations, the stakes are higher than ever.

A single biased prediction or data breach, or a persistent lack of transparency, can erode customer confidence and invite regulatory scrutiny.

This is why AI success today is not just about accuracy or speed—it’s about trust, accountability, and control at scale.

At ISmile Technologies, we help enterprises move beyond experimentation by building cloud-native, secure, and governed AI ecosystems powered by Microsoft Azure.

Why Trust is the Real Bottleneck in AI Adoption

Despite significant investments in AI, many organizations struggle to scale beyond pilot projects. The reason is not technology—it’s trust.

Key challenges include:

- Lack of transparency in AI decision-making

- Data privacy and security concerns

- Bias in models and inconsistent outcomes

- Absence of governance across the AI lifecycle

Without trust, AI adoption slows down. Stakeholders hesitate, regulators intervene, and innovation stalls.

To overcome this, organizations must embed trust directly into their AI architecture—not treat it as an afterthought.

How Enterprises Can Operationalize Trust in AI

Building trusted AI requires a combination of technology, governance, and process integration.

Here’s how enterprises can make it practical:

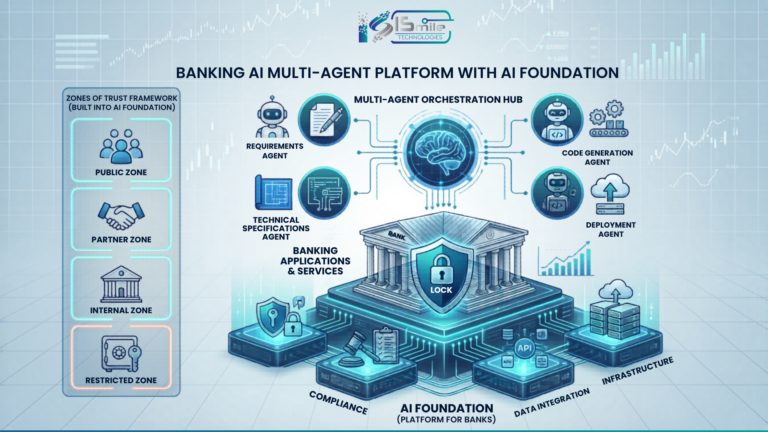

1. Start with a Cloud-Native AI Foundation

Modern AI systems require scalable and secure infrastructure. Platforms like Microsoft Azure enable organizations to build AI solutions with:

- Built-in compliance and security controls

- Scalable compute for model training and inference

- Integrated AI services and development tools

ISmile Technologies helps organizations design and deploy Azure-based AI architectures that are secure, flexible, and enterprise-ready.

2. Implement End-to-End Governance

Trust in AI comes from visibility and control.

Organizations must establish:

- Data lineage and ownership

- Model validation and approval workflows

- Continuous monitoring for drift and bias

- Audit trails for compliance
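
As an illustration of the audit-trail item above, here is a minimal sketch of a tamper-evident log for model validation and approval events. It is not a description of any particular product: the `AuditTrail` class, its field names, and the hash-chaining scheme are assumptions chosen for the example, and a production system would normally write to managed, access-controlled storage.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    # Stable serialization so the same body always produces the same hash.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditTrail:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    def record(self, actor: str, action: str, model_id: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"actor": actor, "action": action,
                "model_id": model_id, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain is unbroken."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Because each entry includes the hash of the one before it, editing an earlier record changes its recomputed hash and `verify()` returns `False`. That property is what makes the log useful as compliance evidence.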

ISmile Technologies enables governance frameworks that align with regulations such as GDPR and HIPAA and with ISO standards, supporting accountability at every stage.

3. Secure Data with Confidential Computing

AI systems often rely on sensitive data, making security a top priority.

Using Microsoft Azure Confidential Computing, which runs workloads inside hardware-based trusted execution environments, organizations can:

- Keep data encrypted in memory while it is being processed

- Protect intellectual property and customer data

- Enable secure collaboration across teams

Combined with encryption at rest and in transit, this approach keeps data protected while in use as well, a critical requirement for regulated industries.

Key Pillars for Scalable and Responsible AI

To scale AI responsibly, enterprises must focus on three core pillars:

Transparency and Explainability

AI systems must provide clear insights into how decisions are made, enabling trust among users and regulators.
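
To make "how decisions are made" concrete, the sketch below breaks a linear score into per-feature contributions. It assumes a simple linear scoring model with hypothetical feature names and weights. More complex models would need other attribution techniques, so treat this only as an illustration of additive explanations.

```python
def explain_linear_score(weights, bias, features):
    """Return the score and each feature's additive contribution to it.

    Contributions are ranked by absolute size, so the most influential
    feature comes first regardless of sign.
    """
    contributions = {name: weights[name] * value
                     for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(),
                    key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical credit-style example; the weights and inputs are made up.
weights = {"income": 0.5, "debt_ratio": -2.0, "late_payments": -1.0}
applicant = {"income": 4.0, "debt_ratio": 0.5, "late_payments": 3.0}
score, ranked = explain_linear_score(weights, bias=1.0, features=applicant)
```

Here `ranked` lists `late_payments` first, which is the kind of plain statement ("late payments lowered this score the most") that a user or regulator can act on.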

Security and Privacy

Robust infrastructure ensures sensitive data is protected throughout the AI lifecycle.

Continuous Monitoring and Improvement

AI models must be regularly evaluated to ensure accuracy, fairness, and performance over time.
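
One common way to put a number on "evaluated over time" is the population stability index (PSI), which compares a feature's recent distribution with its training-time baseline. The sketch below is a plain-Python version under stated assumptions: equal-width bins taken from the baseline range, a small floor to avoid taking the log of zero, and a function name chosen for this example.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range go into the edge bins.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # Floor each proportion so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))
```

Identical samples give a PSI of zero, and the value grows as the recent data moves away from the baseline. The alert threshold is a policy choice. A frequently cited rule of thumb treats values above roughly 0.25 as a significant shift, but that cutoff should be validated for each use case.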

Together, these pillars create a foundation where AI systems are not only powerful—but also reliable and accountable.

Responsible AI in Cloud-Native Architectures

As organizations move to cloud-native environments, responsible AI takes on a new dimension.

Cloud platforms like Azure introduce:

- Distributed data environments

- Real-time processing pipelines

- Multi-tenant architectures

These characteristics require governance and security to be embedded at the infrastructure level, not just at the application level.

ISmile Technologies helps organizations implement:

- Policy-driven automation for compliance

- Secure AI pipelines across cloud and hybrid environments

- Integrated monitoring across data, models, and infrastructure

This ensures that responsibility is built into the system—not added later.
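
Policy-driven automation can be pictured as a set of machine-checkable rules evaluated before every deployment. The following is a minimal sketch rather than any specific Azure feature: `DeploymentRequest`, the three rules, and the allowed-region list are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DeploymentRequest:
    """Hypothetical description of a model deployment awaiting release."""
    model_id: str
    encrypted_at_rest: bool
    approved_by: Optional[str]
    region: str


# Hypothetical policy set; real rules would come from the compliance team.
POLICIES = {
    "encryption required": lambda r: r.encrypted_at_rest,
    "approval recorded": lambda r: bool(r.approved_by),
    "region allowed": lambda r: r.region in {"westeurope", "northeurope"},
}


def evaluate(request: DeploymentRequest) -> list:
    """Return the names of all policies the request violates."""
    return [name for name, rule in POLICIES.items() if not rule(request)]
```

A pipeline would call `evaluate()` as a gate and refuse to deploy when the returned list is not empty, so compliance is enforced on every release instead of being reviewed after the fact.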

How Generative AI Changes Governance Requirements

The rise of Generative AI introduces new challenges:

- Risk of hallucinations and misinformation

- Lack of explainability in large models

- Data leakage through prompts and outputs

To address this, organizations must:

- Implement guardrails and content filtering

- Monitor outputs in real time

- Establish human-in-the-loop validation

- Enforce strict data access policies
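
The measures above can be sketched as two small checks: one applied to the prompt before it leaves the organization, and one to the generated output before it reaches a user. Everything here is an assumption made for illustration, including the email pattern, the blocklist terms, and the `confidence` score, which is presumed to come from some upstream validator. A production deployment would normally rely on a dedicated content-safety service.

```python
import re

# Simple email pattern for illustration; real PII detection needs far more coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical blocklist of terms that must never appear in released output.
BLOCKED_TERMS = {"internal-only", "confidential"}


def redact_prompt(prompt: str) -> str:
    """Mask email addresses before the prompt is sent to the model."""
    return EMAIL.sub("[REDACTED_EMAIL]", prompt)


def review_output(text: str, confidence: float, threshold: float = 0.8) -> str:
    """Return 'block', 'human_review', or 'release' for a generated output."""
    lowered = text.lower()
    if any(term in lowered for term in BLOCKED_TERMS):
        return "block"
    if confidence < threshold:
        # Low-confidence answers are routed to a person instead of the user.
        return "human_review"
    return "release"
```

For example, `redact_prompt("Contact jane.doe@example.com today")` returns `"Contact [REDACTED_EMAIL] today"`, and an output with a confidence of 0.5 is routed to `"human_review"` under the default threshold.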

ISmile Technologies helps enterprises deploy safe and governed GenAI solutions that balance innovation with control.

Industry Use Cases: Trust in Action

Healthcare (Pharma & Life Sciences)

AI models assist in diagnostics and drug discovery—but require strict compliance with patient data regulations. ISmile enables secure AI environments with full auditability and data protection.

Financial Services

AI-driven credit scoring and fraud detection demand transparency and fairness. ISmile ensures explainable models and governance frameworks to meet regulatory requirements.

Retail & Supply Chain

AI improves demand forecasting and personalization. ISmile helps unify data pipelines and implement real-time monitoring to ensure accuracy and reliability.

The ISmile Technologies Approach: From Data to Deployment

ISmile combines cloud, data, and AI expertise to help enterprises operationalize responsible AI.

Our approach includes:

- Designing Azure-based AI architectures

- Implementing governance and compliance frameworks

- Securing data with confidential computing

- Enabling real-time monitoring and optimization

- Supporting end-to-end AI lifecycle management

This ensures that AI systems are not only scalable—but also secure, ethical, and trustworthy.

Conclusion

As AI becomes the backbone of enterprise decision-making, trust will define its success.

Organizations that prioritize responsible AI can innovate faster, reduce risk, and build lasting confidence with customers and regulators.

The future of AI is not just intelligent—it is secure, transparent, and accountable.

With ISmile Technologies, enterprises can move beyond experimentation and build AI systems that are trusted, scalable, and ready for the real world.

Frequently Asked Questions (FAQs)

1. What does it mean to operationalize trust in AI?

It means embedding governance, security, and transparency into every stage of the AI lifecycle—from data collection to model deployment and monitoring.

2. Why is trust critical for scaling AI?

Without trust, organizations face resistance from stakeholders, regulatory challenges, and reduced adoption of AI systems.

3. How does Microsoft Azure support Responsible AI?

Azure provides built-in security, compliance, confidential computing, and AI services that help organizations develop and deploy trusted AI solutions.

4. What is confidential computing in AI?

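It is a security approach that protects data while it is being processed by running the computation inside hardware-based trusted execution environments. Combined with encryption at rest and in transit, it provides end-to-end data protection.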

5. How does Generative AI impact governance?

Generative AI introduces risks like hallucinations and data leakage, requiring stronger monitoring, validation, and control mechanisms.

6. What industries benefit most from Responsible AI?

Highly regulated industries like healthcare, finance, and pharmaceuticals benefit significantly due to strict compliance and data security requirements.

7. What are the risks of not implementing Responsible AI?

Risks include biased outcomes, data breaches, regulatory penalties, and loss of customer trust.

8. How can organizations start their Responsible AI journey?

They can begin by adopting cloud-native infrastructure, implementing governance frameworks, securing data, and enabling continuous monitoring.

9. How does ISmile Technologies differentiate in AI implementation?

ISmile Technologies combines cloud, AI, and governance expertise with real-world implementation experience, enabling scalable and secure AI adoption.

10. Can Responsible AI slow down innovation?

No—when implemented correctly, it accelerates innovation by reducing risks and increasing confidence in AI systems.